The AI chatbot is dead: welcome to agentic resolution


💬 What is an AI chatbot?

An AI chatbot is a software application that uses machine learning (ML) and natural language processing (NLP) to simulate human conversation. In 2026, AI chatbots are being replaced by agentic AI systems that don’t just understand language – they take action.

Unlike traditional rule-based bots that follow fixed decision trees, AI chatbots interpret the intent behind a user’s message, generate contextual responses, and can handle a wider variety of phrasing and questions. Most AI chatbots are deployed on websites, mobile apps, or messaging channels to deflect FAQs, provide self-service support, and route users to human agents when needed.

In 2026, the biggest limitation of the typical artificial intelligence chatbot is not what it can read, but what it can’t do: it lives in a read-only world, answering questions without ever taking action on the underlying systems. If an agent can’t write back, it’s just a glorified search bar.

How AI chatbots work

Understanding how chatbots work is just the starting point. What really matters is what they can (and can’t) actually do for your customers.

NLP & intent detection (how chatbots understand)

AI chatbots rely on NLP to parse user messages, identify entities, and infer intent – a core part of what a chatbot does in customer service journeys. They tokenize the input, run it through a language model, and classify what the customer is trying to do: reset a password, check an order status, or ask about pricing.

LLMs have transformed these engines from brittle keyword matchers into systems that can handle slang, typos, and complex sentence structures far more reliably than previous-generation bots.

But intent detection is only half the story. The chatbot can understand that a user wants to change their shipping address or cancel a subscription; it still can’t directly perform that action. Understanding without execution is where expectations start to diverge from reality.
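The tokenize-then-classify step described above can be sketched in a few lines. This is a minimal illustration, assuming a toy keyword-scoring model in place of a real language model; the intent names and keyword sets are hypothetical and simply mirror the examples in this section.

```python
import re
from collections import Counter

# Hypothetical intents and trigger words. A production system would use a
# trained classifier or an LLM instead of hand-written keyword sets.
INTENT_KEYWORDS = {
    "reset_password": {"reset", "password", "forgot", "locked"},
    "order_status": {"order", "status", "track", "delivery", "shipped"},
    "pricing": {"price", "pricing", "cost", "plan", "plans"},
}


def tokenize(message: str) -> list[str]:
    """Lowercase the message and split it into alphabetic tokens."""
    return re.findall(r"[a-z']+", message.lower())


def detect_intent(message: str) -> str:
    """Return the intent whose keywords overlap most with the message."""
    tokens = Counter(tokenize(message))
    scores = {
        intent: sum(tokens[word] for word in keywords)
        for intent, keywords in INTENT_KEYWORDS.items()
    }
    best_intent, best_score = max(scores.items(), key=lambda item: item[1])
    # No keyword matched at all: report that the intent is unknown.
    return best_intent if best_score > 0 else "unknown"
```

Note what the function returns: a label. Knowing that the intent is `reset_password` does not reset anything, which is the gap this section describes.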

Knowledge base retrieval (how chatbots answer)

Once intent is detected, AI chatbots typically query a static knowledge base using search, dense embeddings, or retrieval-augmented generation (RAG). They pull relevant help center articles, FAQs, or internal docs, then summarize or rephrase that content into a conversational answer.

This works well for ‘how do I’ questions where an explanation is enough: ‘How do I reset my password?’ or ‘Where can I download my invoice?’ However, it falls apart when the user is not asking how to do something but wants it done for them. A chatbot that explains how to issue a refund, instead of just issuing it, creates friction rather than resolution.
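To make the retrieval step concrete, here is a small sketch. It assumes bag-of-words count vectors as a stand-in for dense embeddings, and the three articles are hypothetical; a real deployment would use a vector index and pass the retrieved text to a language model for rephrasing.

```python
import math
import re
from collections import Counter

# Hypothetical help-center articles standing in for a real knowledge base.
ARTICLES = {
    "reset-password": "How to reset your password from the login page",
    "download-invoice": "Where to download your invoice in billing settings",
    "refund-policy": "How refunds work and when a refund is issued",
}


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words count vector, not a dense vector."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str) -> str:
    """Return the id of the article most similar to the question."""
    query = embed(question)
    return max(ARTICLES, key=lambda aid: cosine(query, embed(ARTICLES[aid])))
```

Calling `retrieve("Please issue a refund")` returns the `refund-policy` article id. The customer gets an explanation of refunds; no refund is issued.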

The critical limitation: read-only architecture

Under the hood, most AI chatbots are read-only. They can read from your knowledge base and sometimes read from your CRM or ticketing system via APIs, but they rarely have safe, structured ways to write back.

While the benefits of chatbots are real for FAQ deflection, the read-only architecture caps what they can achieve. That means:

- They can look up an order status, but not reschedule delivery.

- They can check entitlements, but not upgrade a plan.

- They can see a ticket, but not actually resolve and close it.

The interface looks intelligent because the language model is fluent, yet the architecture keeps your support experience trapped at the Answers layer instead of moving into Actions.
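The difference between the two layers shows up in the interface a bot is given. The sketch below assumes a hypothetical in-memory `OrderSystem`; the class and method names are illustrative and do not correspond to any vendor API.

```python
from dataclasses import dataclass, field


@dataclass
class OrderSystem:
    """Hypothetical in-memory stand-in for an order management backend."""
    orders: dict = field(
        default_factory=lambda: {
            "A100": {"status": "delayed", "delivery": "2026-03-02"}
        }
    )


class ReadOnlyBot:
    """Answers layer: only read methods exist, so state can never change."""

    def __init__(self, system: OrderSystem):
        self._system = system

    def order_status(self, order_id: str) -> str:
        return self._system.orders[order_id]["status"]


class WriteBackAgent(ReadOnlyBot):
    """Actions layer: the same reads plus one explicit write path."""

    def reschedule(self, order_id: str, new_date: str) -> str:
        self._system.orders[order_id]["delivery"] = new_date
        return new_date
```

However fluent the language model in front of `ReadOnlyBot` is, it has no method that reschedules a delivery. The ceiling is set by the interface, not by the model.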

Related article: For a deeper technical breakdown of AI chatbot technology and AI chatbot architecture, check out how AI chatbots work.

The three generations of AI chatbots

To understand why AI chatbots are hitting a ceiling, it helps to place them in a simple three‑generation model of artificial intelligence chatbot evolution:

- Gen 1: Rule-based chatbots (search)

- Gen 2: AI chatbots (answers)

- Gen 3: Agentic AI (actions)

Gen 1 → Gen 2 → Gen 3 at a glance

In Gen 1, chatbots were essentially decision trees with buttons. They matched keywords, followed predefined flows, and routed to a human when anything fell outside the script. Unlike conversational AI, these chatbots lived squarely in the Search layer: slightly better than a static FAQ page, but just as brittle.
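A Gen 1 bot can be approximated in a dozen lines, which is also why it is brittle. The rules and replies below are hypothetical.

```python
# Hypothetical keyword-to-reply rules. Anything that matches no rule
# falls through to a human handoff.
RULES = [
    ("password", "Go to Settings > Security to reset your password."),
    ("invoice", "Invoices are available under Billing > History."),
]
HANDOFF = "Let me connect you with a human agent."


def gen1_reply(message: str) -> str:
    """Return the first scripted reply whose keyword appears in the message."""
    text = message.lower()
    for keyword, reply in RULES:
        if keyword in text:
            return reply
    return HANDOFF
```

A message such as "I can't log in" expresses the same need as a password question but contains no scripted keyword, so it goes straight to the handoff.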

Gen 2 AI chatbots moved to LLMs and RAG, dramatically improving language understanding and answer quality. They pulled from your knowledge base and summarized content. However, they still lived in the Answers column. They could explain, but not execute.

Gen 3 agentic AI adds an entirely new axis: structured, safe integration with your operational systems. Instead of just reading from your CRM, ticketing, or engineering tools, Gen 3 agents can write back – updating records, resolving tickets, triggering workflows, and coordinating downstream systems.

Related article: See real-world chatbot examples in action across industries.

Why Gen 2 hits a ceiling

Most AI chatbots offered by major CX platforms today – Zendesk, Intercom, Salesforce and others – are Gen 2 systems sitting in the Answers column. They are excellent at understanding your question and retrieving a relevant answer, often for a fraction of the cost of a human agent.

But they can’t, by default:

- Update your CRM with new information.

- Close or reassign a support ticket.

- Create a Jira issue and link it to a customer conversation.

That’s not resolution – that’s a search bar with a personality layered on top of your knowledge base.

Industry analysts are seeing the same pattern. Gartner predicts that over 40% of agentic AI projects will be scaled back or canceled by the end of 2027. The pattern is consistent: projects fail not because the AI is incapable, but because the underlying data architecture isn’t solved first.

Without a live, connected knowledge graph, even the most advanced model is reduced to a smarter FAQ. Architecture beats interface every time.

Why AI chatbots fail at resolution

By this point, you understand the difference between an AI agent and a chatbot and where the chatbot sits historically. Now comes the critical reveal: why that architecture fails when your goal is resolution, not deflection.

1. The deflection illusion

Standard AI chatbots are often sold on deflection rates. But when you dig into the data, a large chunk of this deflection is actually abandonment. The user saw a partial answer, got frustrated, and simply gave up.

Traditional self-service and early chatbot deployments have consistently featured poor user experiences that garner disappointing uptake, even as vendors report strong metrics.

Legacy AI chatbots often look successful in dashboards, but actual user adoption and effective self-service are much lower.
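The gap between the dashboard number and the customer outcome is simple arithmetic. The sketch below uses a synthetic sample of ten sessions, invented purely for illustration (it is not customer data), and assumes each session records whether it was escalated and whether the issue was actually fixed.

```python
from dataclasses import dataclass


@dataclass
class Session:
    escalated: bool  # handed to a human agent
    resolved: bool   # the customer's issue was actually fixed


def deflection_rate(sessions: list[Session]) -> float:
    """Share of sessions that never reached a human, whatever the outcome."""
    return sum(not s.escalated for s in sessions) / len(sessions)


def resolution_rate(sessions: list[Session]) -> float:
    """Share of sessions resolved without a human."""
    return sum(s.resolved and not s.escalated for s in sessions) / len(sessions)


def abandonment_rate(sessions: list[Session]) -> float:
    """Share of sessions that neither reached a human nor got resolved."""
    return sum(not s.escalated and not s.resolved for s in sessions) / len(sessions)


# Synthetic sample: 3 escalated, 2 self-resolved, 5 abandoned.
SAMPLE = (
    [Session(escalated=True, resolved=True)] * 3
    + [Session(escalated=False, resolved=True)] * 2
    + [Session(escalated=False, resolved=False)] * 5
)
```

On this sample the deflection rate is 0.7 while the resolution rate is 0.2. The remaining 0.5 is abandonment that a deflection dashboard counts as success.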

BILL, whose platform serves a member network of more than 8 million and processes approximately 1% of US GDP annually, had a self-serve rate of 3% before choosing DevRev’s AI teammate, Computer.

Before Computer, BILL’s chatbot looked successful because it reduced agent handle time, but from the customer’s perspective, nothing meaningful had changed.

With Computer, their resolution rates went above 70%. Not deflection. Resolution. Issues actually solved. Customers actually helped.

2. Session death and context loss

Most AI chatbots operate in a single-session context. Once the chat window is closed, the conversation state is effectively gone or at least not reused meaningfully the next time the customer returns.

That leads to the familiar pattern:

- The customer explains their issue to the chatbot.

- Conversation times out or gets escalated.

- A human agent joins and asks the customer to repeat everything.

Over 80% of customers expect bots to escalate the interaction to a human when needed, but only 38% say it happens.

The frustration is visible across the industry: high bounce rates on help content, customers re-opening tickets, and repeated explanations in multi-channel journeys. Even this page, a resource ABOUT chatbots, sees nearly half its visitors leave before finding what they need. It’s a symptom of the stateless experience problem.

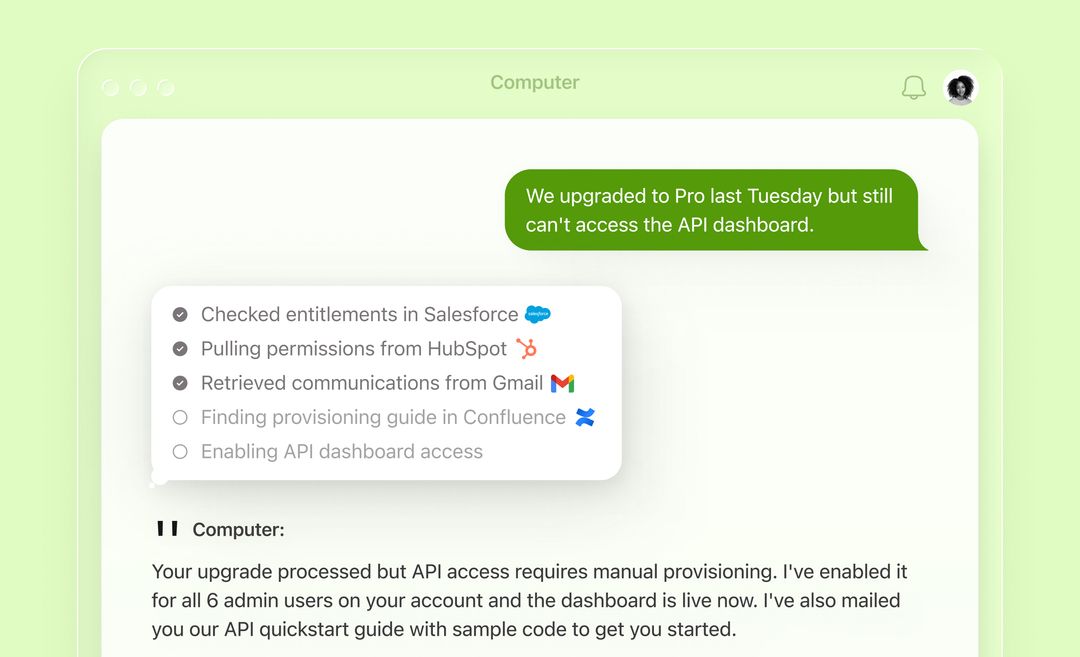

3. The read-only trap

The fundamental reason AI chatbots fail at resolution is the read-only trap. They can:

- Tell a customer their order is delayed.

- Confirm that a feature is not available on their current plan.

- Explain how to file a bug report.

But they can’t:

- Reschedule the delivery automatically.

- Upgrade the plan and pro-rate the billing.

- Create and link an engineering issue with full context.

That requires write-back. Chatbots don’t have it.

Every action still requires a human to step in, interpret the situation, and operate the underlying systems. The chatbot software becomes a thin conversational layer on top of the status quo.

Competitors in the Gen 2 space show how hard this ceiling is: CRM-native assistants inherit a read-heavy, CRM-first architecture that caps practical resolution rates around 20-30% on eligible intents. These aren’t failing products – they’re just operating at the ceiling of the AI chatbot generation.

See how Computer moves beyond deflection → [Interactive demo]

What replaces the AI chatbot: agentic resolution

If you’re evaluating chatbot platforms and your primary goal is resolution – not just deflection – the question is no longer which chatbot to buy. It’s whether a chatbot is the right architecture at all.

If Gen 2 AI chatbot systems live in the Answers column, Gen 3 agentic AI lives in Actions. The shift is not to slightly better chatbots; it is to a different architecture built around resolution instead of deflection.

A simple way to frame this is the Search → Answers → Actions framework:

- Search: Gen 1 chatbots, decision-tree flows, keyword matching against FAQs.

- Answers: Gen 2 AI chat tools and assistants, LLM-powered understanding plus KB retrieval.

- Actions: Gen 3 agentic AI, reasoning over a live knowledge graph.

From indexed KBs to a live knowledge graph

Traditional AI chatbots index content: they crawl help center articles, documents, and internal FAQs, then build embeddings to support retrieval. That index is powerful for answering questions, but it is still a snapshot: it doesn’t represent live system state or relationships.

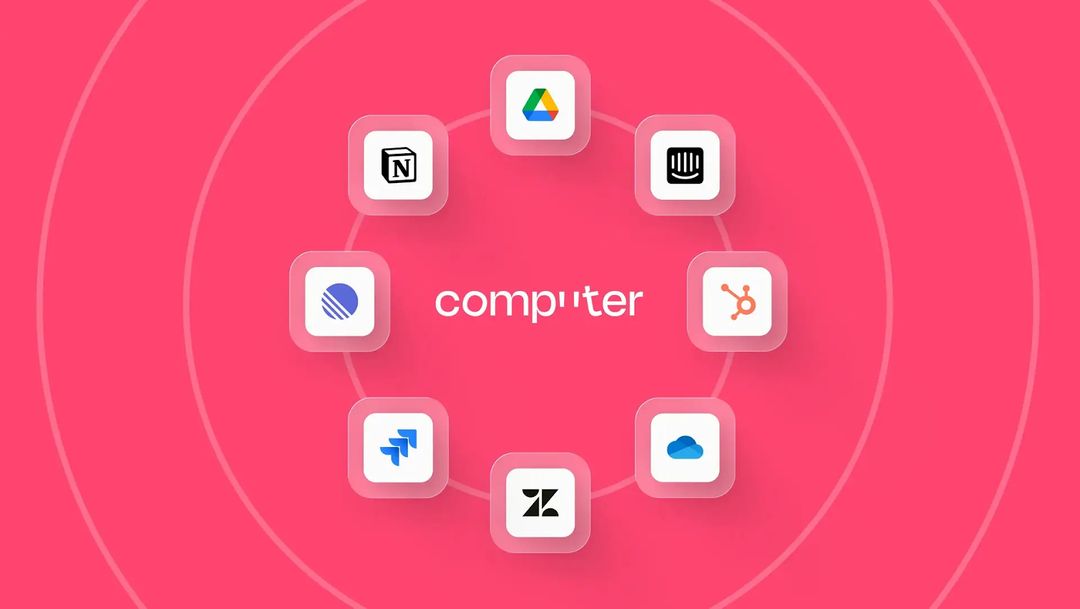

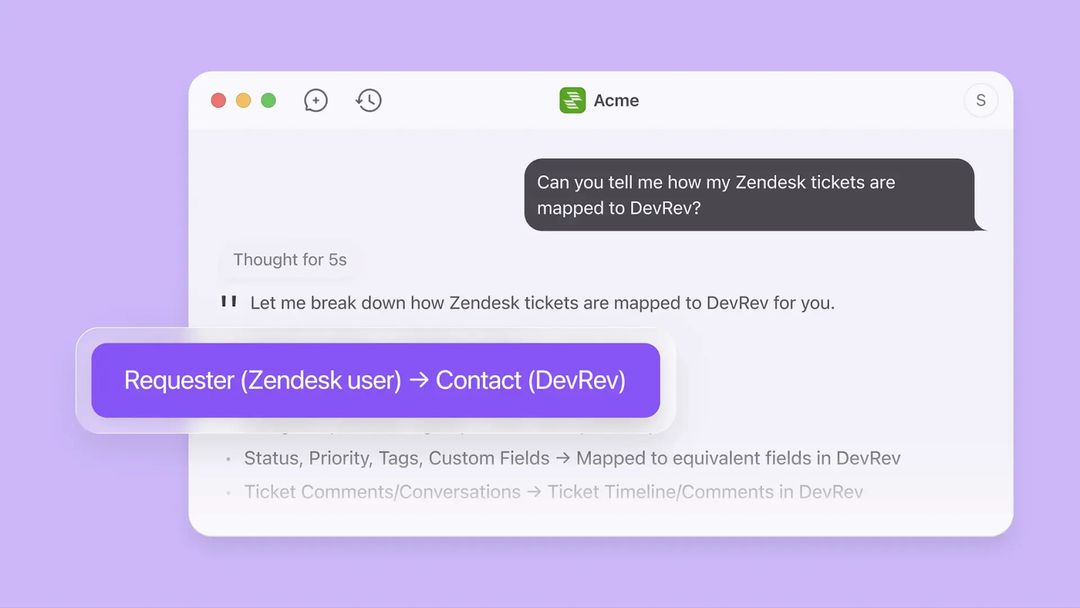

DevRev’s AI teammate, known as Computer, moves from static indexes to a live, permission-aware knowledge graph.

Computer Memory

Computer memory continuously synchronizes with your operational systems. Instead of just knowing that Plan X includes Feature Y, the graph knows which customers are on which plan, which issues they’ve reported, and which engineering work items are linked to those issues.
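The relationships described above (customer to plan, customer to reported issue, issue to engineering work item) are graph edges. The following is a minimal sketch with hypothetical node names and relation labels; it is not DevRev’s data model, only an illustration of why a graph answers questions a document index cannot.

```python
# Hypothetical edges in the form (source, relation, target).
EDGES = [
    ("customer:acme", "on_plan", "plan:pro"),
    ("plan:pro", "includes", "feature:sso"),
    ("customer:acme", "reported", "ticket:42"),
    ("ticket:42", "linked_to", "issue:ENG-7"),
]


def neighbors(node: str, relation: str) -> list[str]:
    """Return every target reachable from node over the given relation."""
    return [dst for src, rel, dst in EDGES if src == node and rel == relation]


def linked_engineering_work(customer: str) -> list[str]:
    """Follow customer -> reported ticket -> linked engineering issue."""
    return [
        issue
        for ticket in neighbors(customer, "reported")
        for issue in neighbors(ticket, "linked_to")
    ]
```

A static index can say that a plan includes a feature. Only the edges tied to a specific customer can say which engineering issue is blocking that customer right now.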

AirSync

At the heart of this architecture is AirSync, which reads from and writes back to your systems.

- It reads customer records, tickets, and issues to understand context.

- It writes updates back when an agent resolves an issue, modifies a record, or triggers a workflow.

When a customer asks to change billing details, the agent can validate, execute the update in the billing system, and log the action – all without human intervention, within guardrails you define.
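The validate, execute, and log sequence can be sketched as a single guarded function. Everything here is hypothetical: the `BillingSystem` class, the function name, and the allowed-domain guardrail are assumptions made for illustration and are not AirSync’s actual interface.

```python
from dataclasses import dataclass, field


@dataclass
class BillingSystem:
    """Hypothetical billing backend with an append-only audit log."""
    emails: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)


def update_billing_email(
    system: BillingSystem,
    customer_id: str,
    new_email: str,
    allowed_domains: set,
) -> bool:
    """Validate, execute, and log a billing-email change.

    The guardrail (an assumed policy) only allows addresses on an
    approved domain. Rejections are logged as well as successes.
    """
    domain = new_email.rpartition("@")[2]
    if "@" not in new_email or domain not in allowed_domains:
        system.audit_log.append(("rejected", customer_id, new_email))
        return False
    system.emails[customer_id] = new_email
    system.audit_log.append(("updated", customer_id, new_email))
    return True
```

The write happens only after the guardrail passes, and both outcomes leave an audit entry, so a human can review what the agent did and what it refused to do.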

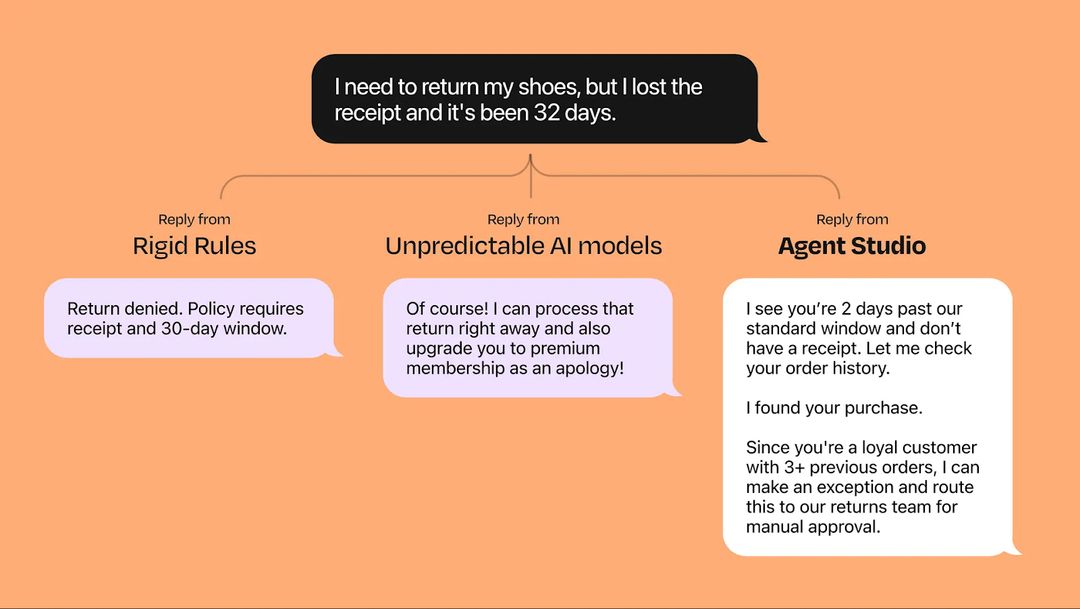

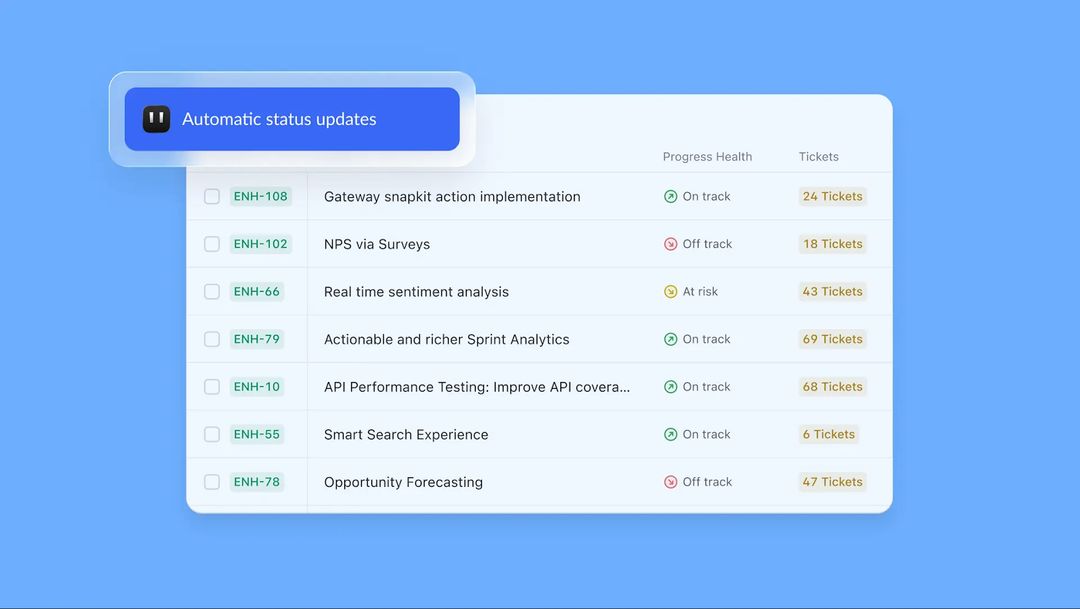

Agent Studio

Legacy chatbot software builders focus on conversation design: drag-and-drop flows, decision branches, and scripted intent handling. Agent Studio flips this: you build resolution flows, not conversational tree diagrams.

Examples:

- If a customer reports a failed payment, attempt automated retry, check dunning rules, and send a status update.

- If an IT request for password reset meets policy, automatically reset, notify the user, and close the ticket.

You define outcomes, constraints, and guardrails; custom agents figure out the steps.
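One way to picture a resolution flow is as an outcome guarded by a policy check. The sketch below models the password-reset example with hypothetical names. The steps are listed explicitly to keep the example small; the planning an agent would do to choose them is out of scope, and this is not Agent Studio’s configuration format.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Ticket:
    user: str
    identity_verified: bool
    status: str = "open"
    events: list = field(default_factory=list)


@dataclass
class ResolutionFlow:
    """Steps that run only when the guardrail passes; otherwise escalate."""
    guardrail: Callable[[Ticket], bool]
    steps: list

    def run(self, ticket: Ticket) -> str:
        if not self.guardrail(ticket):
            ticket.status = "escalated"
            return ticket.status
        for step in self.steps:
            step(ticket)
        return ticket.status


def reset_password(ticket: Ticket) -> None:
    ticket.events.append("password_reset")


def notify_user(ticket: Ticket) -> None:
    ticket.events.append("user_notified")


def close_ticket(ticket: Ticket) -> None:
    ticket.status = "closed"


password_reset_flow = ResolutionFlow(
    guardrail=lambda ticket: ticket.identity_verified,
    steps=[reset_password, notify_user, close_ticket],
)
```

A verified request ends with the ticket closed. An unverified one is escalated with no side effects, which is the point of defining the guardrail before the steps.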

Stateful memory

Agentic resolution also requires stateful memory. Instead of treating each chat as a fresh, isolated event, Computer remembers prior interactions, tickets, and key attributes across channels and over time.

That looks like:

- Recognizing returning users and continuing where they left off.

- Automatically incorporating past tickets, account history, and entitlements into decisions.
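The behaviors listed above come down to keying memory by customer rather than by chat session. A minimal sketch, assuming a hypothetical in-process store (a real system would persist this and enforce permissions):

```python
from collections import defaultdict


class ConversationMemory:
    """Hypothetical memory keyed by customer id instead of chat session."""

    def __init__(self) -> None:
        self._history = defaultdict(list)

    def remember(self, customer_id: str, channel: str, note: str) -> None:
        """Record an interaction, whichever channel it arrived on."""
        self._history[customer_id].append((channel, note))

    def context_for(self, customer_id: str) -> list:
        """Return everything known about the customer, oldest first."""
        return list(self._history.get(customer_id, []))
```

Because the key is the customer, a chat that timed out yesterday and an email sent today land in the same history, and the next conversation can start from that context instead of asking the customer to repeat it.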

When you bring these pieces together – a live knowledge graph, AirSync, an Agent Studio for outcome-centric workflows, and stateful memory – the very question ‘which chatbot software should I buy?’ changes. If you’re evaluating chatbot software or AI chat platforms, the first question is no longer ‘which AI chatbot is best’, but ‘do I need a chatbot at all’, or ‘do I need agentic resolution?’

Proof points: what happens when you replace a chatbot

Skeptical CX and IT leaders don’t buy architecture diagrams; they buy numbers. When organizations move from Gen 2 AI chatbot deployments to agentic resolution with platforms like Computer, the impact shows up in hard metrics: resolution rates, savings, and scalability.

Real-world outcomes

BILL, Bolt, and Descope illustrate the shift from deflection-first to resolution-first, turning classic chatbot use cases into end-to-end automated resolution flows:

BILL’s support leaders thought their AI chatbot was doing its job. Dashboards glowed with healthy deflection rates; volumes looked under control. But when they finally audited what self-serve really meant, the truth was blunt. Most successful sessions were customers dropping off after a partial answer.

Turning on Computer felt less like a new tool and more like flipping the lights on. Workflows were wired directly into billing, accounts, and support systems, so when customers came in with everyday problems – billing questions, plan changes, account issues – the system didn’t just explain what to do, it did the work.

For the team, the shift was visceral: fewer queues, fewer repetitive tickets, and far more time for genuinely complex cases.

"The migration was seamless and efficient, and the DevOps side was notably easy. Within just two weeks, we successfully imported around 200,000 Zendesk tickets and 800 knowledge base articles along with 12 workflows."

Elec Boothe

Director of Support Engineering & Risk, Bolt

Descope shows what happens when you build on this foundation. As usage exploded from 10M to over 300M sessions, support headcount stayed flat. Resolution time fell by 54%, tickets moved 5x faster, SLAs stayed at 100%, and 54% of inquiries were resolved through AI‑powered self‑service.

"For me, this is the number one priority for our service motion today: make sure that the product provides self-service and guidance at the scale that we need to support a PLG motion. DevRev’s API-first and extensible platform has enabled us to do exactly that—automating customer success and empowering our users to solve problems independently."

Gilad Shriki

Co-Founder @ Descope

Across customers adopting agentic architectures, you see up to 85% resolution on clearly automatable L1 intents and roughly 4× productivity improvements for human teams who now focus on complex, judgment-heavy work. These are resolution rates, not deflection rates. The difference matters.

See how Computer delivers these results for your team → [Book a demo]

Use cases for AI chatbots vs agentic AI

AI chatbots still have a role. For purely informational questions where a quick answer is enough, Gen 2 AI chatbots are fine. Agentic AI matters when the real goal isn’t an explanation but getting the job done – this is where the more advanced chatbot use cases start to show up.

1. Customer support resolution (primary)

Resolves billing issues end-to-end without human intervention, turning support into an automation engine.

- Chatbot version: The user asks about a failed payment; the bot explains why payments fail and links to an article. That’s a classic chatbot use case: it tells you what to do, but you still have to do it yourself.

- Agentic resolution: Computer inspects the invoice, attempts retry if allowed, updates billing status, and confirms the outcome to the user – then logs everything back into CRM and support tools.

In B2C and B2B support, this shift transforms support from a cost center into an automation engine.

2. Service desk automation (IT/HR)

Handles repetitive IT and HR requests autonomously, from intake to resolution.

- Chatbot version: The employee reports a locked account; the bot routes the ticket to IT with a short summary.

- Agentic resolution: Computer validates identity and policies, resets the password, unlocks the account, notifies the employee, and closes the ticket automatically, with up to 60% of repetitive IT and HR requests handled end-to-end.

McKinsey shows that gen AI in services delivers major gains when embedded in workflows, not just front‑end chat, cutting handling times and automating large portions of service workloads.

3. Agent assist

Cuts assisted ticket resolution times through one-click approval of fully drafted responses and actions.

- Chatbot version: A side-panel assistant suggests canned replies and article links to a human agent.

- Agentic resolution: Computer drafts a complete response, executes the necessary backend actions (refunds, adjustments, updates), and presents the agent with a one-click approval flow, cutting resolution times by roughly 40% on assisted tickets.

For internal teams, this turns a routine chatbot use case into a fully automated workflow.
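The one-click approval pattern can be sketched as a proposal object. This assumes one reasonable reading of the flow, in which backend actions are prepared up front and held until the human agent approves; the class and field names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Proposal:
    """A drafted reply plus backend actions held until a human approves."""
    reply: str
    actions: list  # zero-argument callables, one per backend action
    approved: bool = False
    results: list = field(default_factory=list)

    def approve(self) -> list:
        """Run every pending action exactly once, then mark as approved."""
        if not self.approved:
            self.results = [action() for action in self.actions]
            self.approved = True
        return self.results
```

Nothing touches the backend until `approve()` is called, and calling it a second time does not repeat the refund or adjustment.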

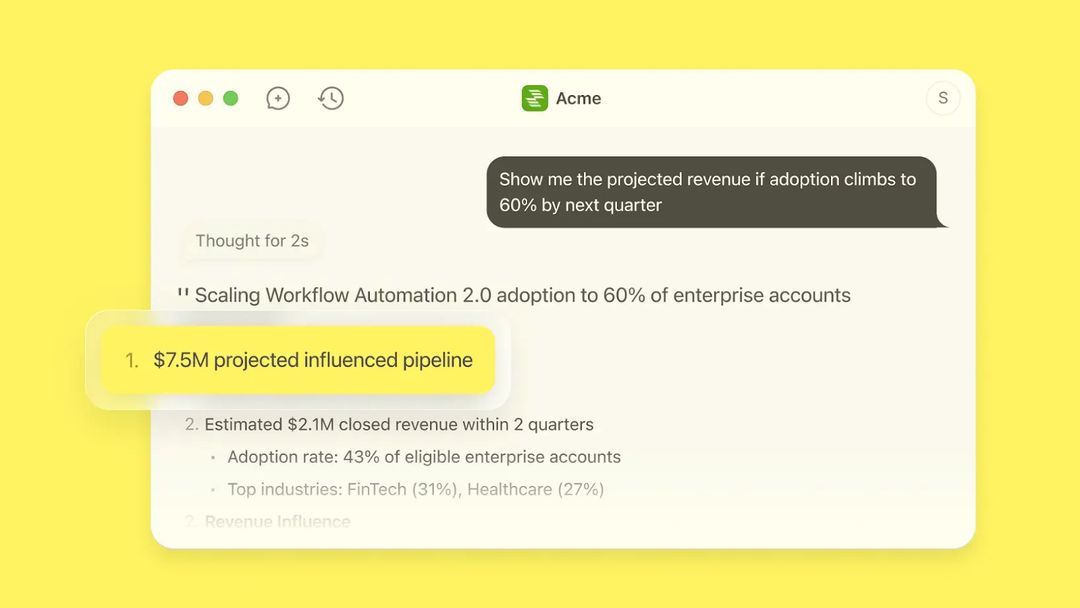

4. Sales and revenue operations

Accelerates lead handoff by auto-enriching CRM and routing qualified prospects to the right rep with full context.

- Chatbot version: A visitor asks about pricing; the bot qualifies them and suggests ‘Talk to sales’.

- Agentic resolution: Computer captures key signals, updates CRM, adds opportunity context, and routes the lead to the right rep with a concise summary of intent and product interest.

How to evaluate chatbot software in 2026

By now, the question ‘which AI chatbot is best?’ needs a qualifier: best for what?

If you’re evaluating chatbot software or AI chat platforms today, you’re really choosing between Gen 2 chatbots and Gen 3 agentic AI.

In a Gen 2 world, the goal is to minimize agent time spent answering routine questions. In a Gen 3 world, the goal is to maximize genuine, autonomous resolution and reorient humans toward high-value problems.

Measured against the framework above, Computer is the only option that checks all Gen 3 boxes.

Ready to move beyond chatbots? See Computer in action → [Book a demo]
